Diffusion Model Clearly Explained!

How does AI artwork work? Understanding the tech behind the rise of AI-generated art.

![Jason Allen’s AI-generated work, “Théâtre D’opéra Spatial,” won first place in the digital category at the Colorado State Fair. [1]](https://codoraven.com/app/uploads/2023/02/01_featured_image-10-1024x587.jpg)

Originally posted on My Medium.

The rise of the Diffusion Model can be regarded as the main factor for the recent breakthrough in the AI generative artworks field.

In this article, I’m going to explain how it works with illustrative diagrams.

Overview

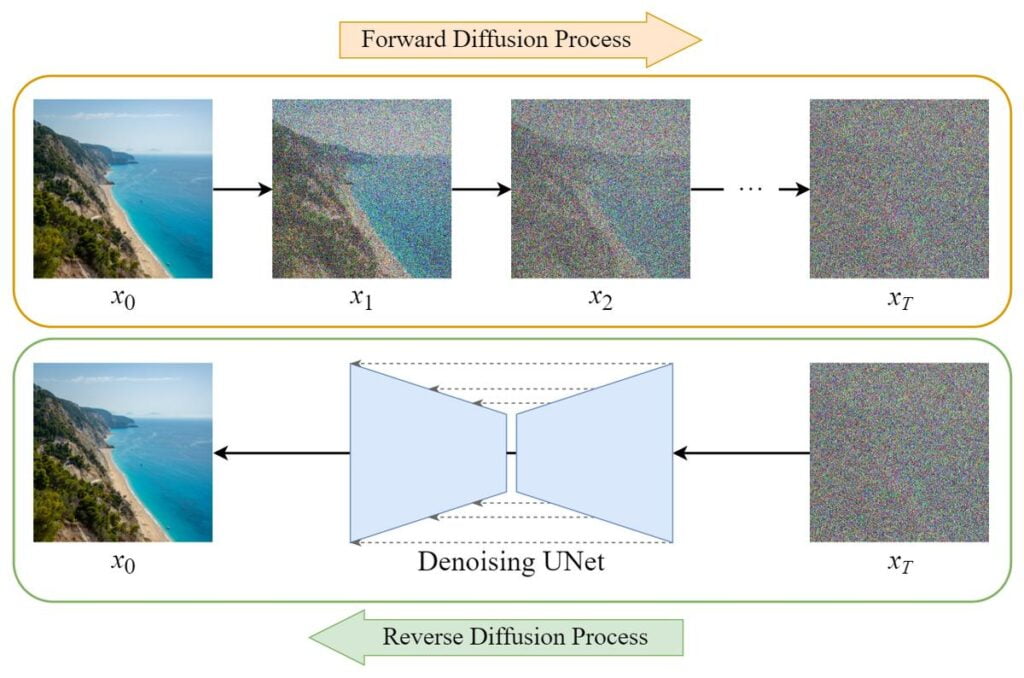

The training of the Diffusion Model can be divided into two parts:

- Forward Diffusion Process → add noise to the image.

- Reverse Diffusion Process → remove noise from the image.

Forward Diffusion Process

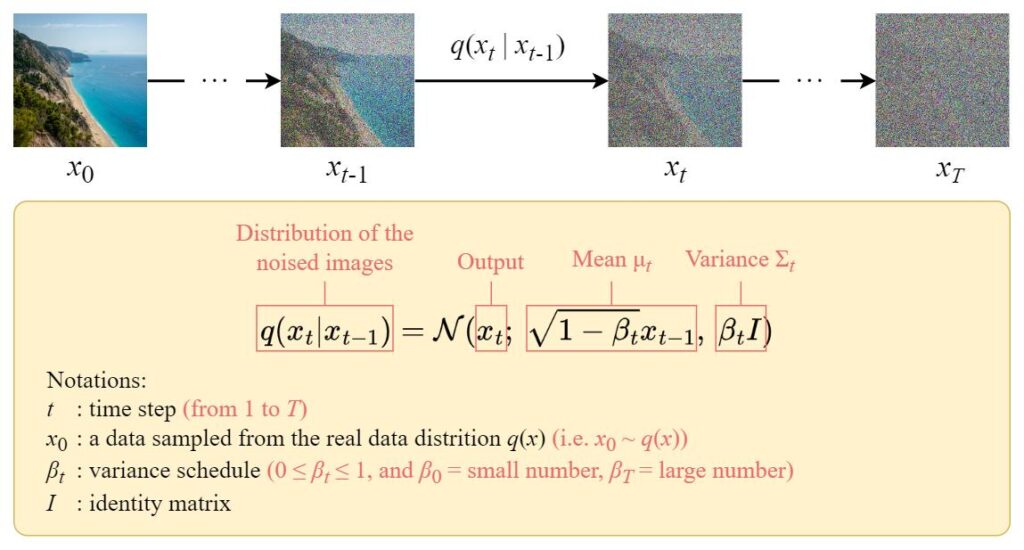

The forward diffusion process gradually adds Gaussian noise to the input image x_0 step by step, and there will be T steps in total. the process will produce a sequence of noisy image samples x_1, \dots, x_T.

When T \to \infty, the final result will become a completely noisy image as if it is sampled from an isotropic Gaussian distribution.

But instead of designing an algorithm to iteratively add noise to the image, we can use a closed-form formula to directly sample a noisy image at a specific time step t.

Closed-Form Formula

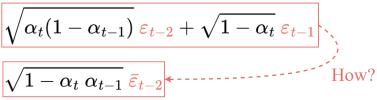

The closed-form sampling formula can be derived using the Reparameterization Trick.

\begin{align*}

&\text{If} &&z \sim \mathcal{N}(\mu, \sigma^2) &&\text{then} \\

& &&z = \mu + \sigma \varepsilon &&\text{where}\ \varepsilon \sim \mathcal{N}(0,1)

\end{align*}

Reparameterization trick

With this trick, we can express the sampled image x_t as follows:

x_t = \sqrt{1-\beta_t}\ x_{t-1} + \sqrt{\beta_t}\ \varepsilon_{t-1} Express x_t using the reparameterization trick

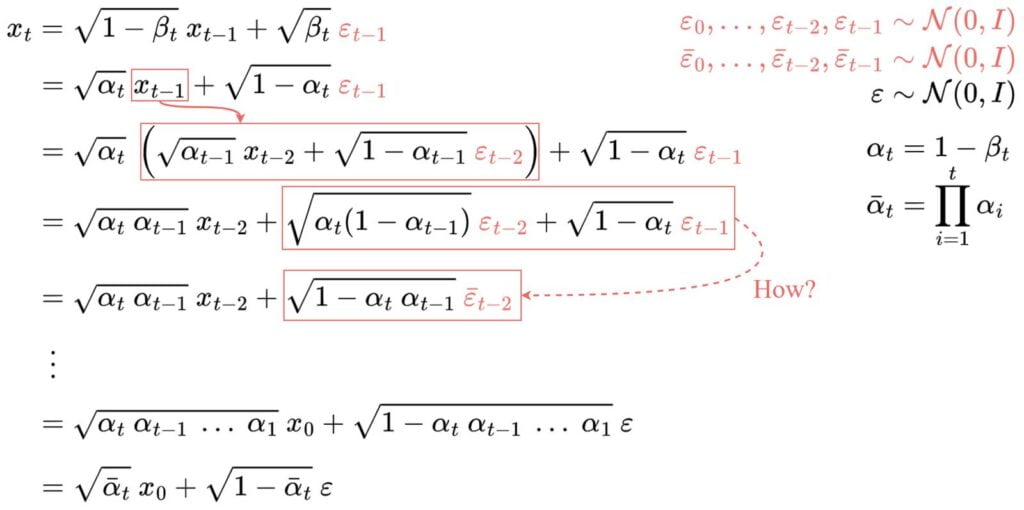

Then we can expand it recursively to get the closed-form formula:

Note:

all the \boldsymbol{\varepsilon} are i.i.d. (independent and identically distributed) standard normal random variables.It is important to distinguish them using different symbols and subscripts because they are independent and their values could be different after sampling.

But how do we jump from line 4 to line 5?

Some people find this step difficult to understand. Here I will show you how it works:

Let’s denote these two terms using X and Y. They can be regarded as samples from two different normal distributions. i.e. X \sim \mathcal{N}(0, \alpha_t(1-\alpha_{t-1})I) and Y \sim \mathcal{N}(0, (1-\alpha_t)I).

Recall that the sum of two normally distributed (independent) random variables is also normally distributed. i.e. \text{If}\ Z = X + Y,\ \text{then}\ Z \sim \mathcal{N}(0, \sigma_X^2+\sigma_Y^2).

Therefore we can merge them together and express the merged normal distribution in the reparameterized form. This is how we combine the two terms.

Repeating these steps will give us the following formula which depends only on the input image x_0:

x_t = \sqrt{\bar{\alpha}_t}\ x_0 + \sqrt{1-\bar{\alpha}_t}\ \varepsilonThe closed-form formula

Now we can directly sample x_t at any time step using this formula, and this makes the forward process much faster.

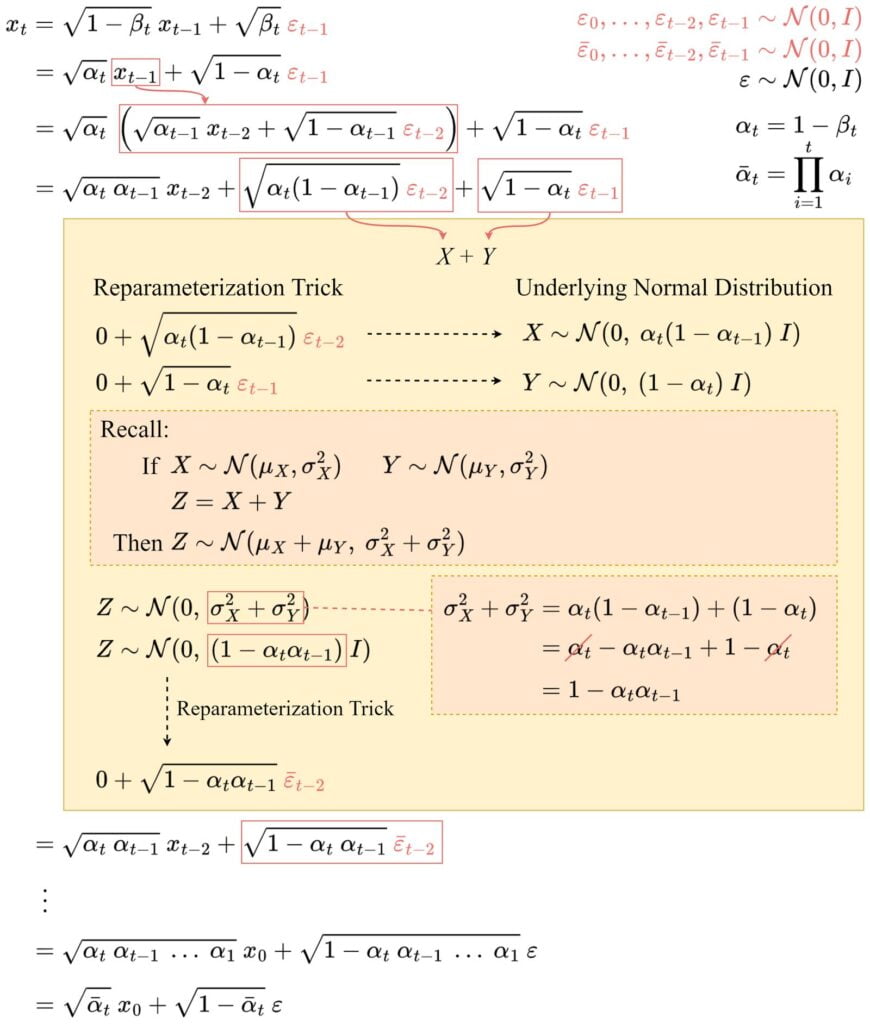

Reverse Diffusion Process

Unlike the forward process, we cannot use q(x_{t-1}|x_t) to reverse the noise since it is intractable (uncomputable).

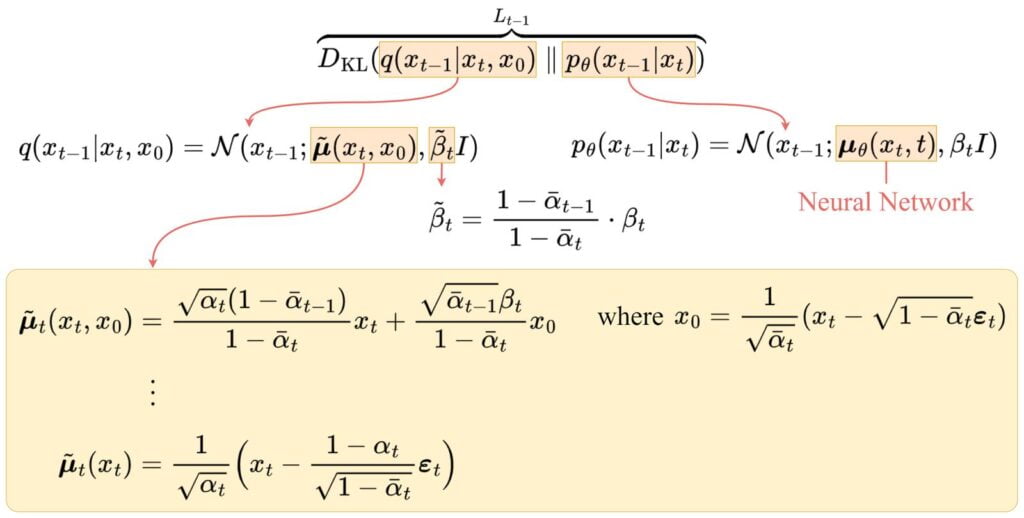

Thus we need to train a neural network p_\theta(x_{t-1}|x_t) to approximate q(x_{t-1}|x_t). The approximation p_\theta(x_{t-1}|x_t) follows a normal distribution and its mean and variance are set as follows:

\begin{cases}

\mu_\theta(x_t, t) &:=\ \ \tilde{\mu}_t(x_t, x_0) \\

\Sigma_\theta(x_t, t) &:=\ \ \tilde{\beta}_t I

\end{cases}mean and variance of p_\theta

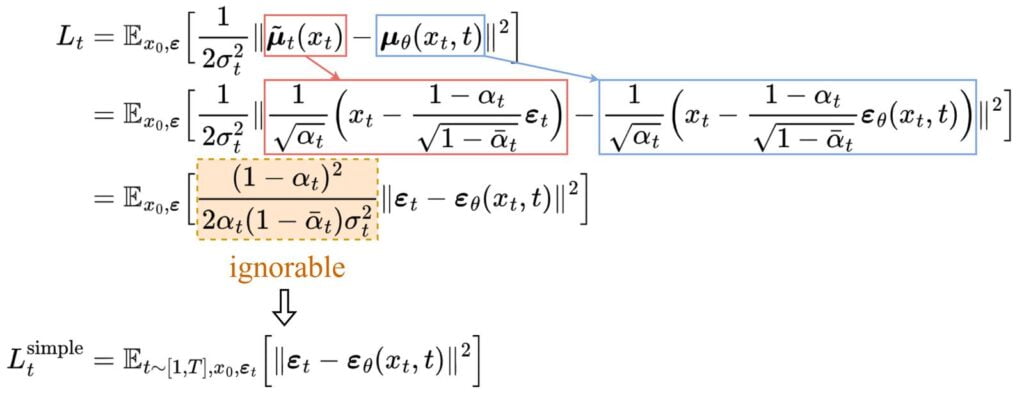

Loss Function

We can define our loss as a Negative Log-Likelihood:

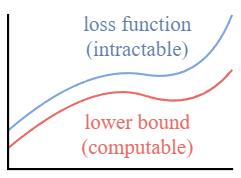

This setup is very similar to the one in VAE. instead of optimizing the intractable loss function itself, we can optimize the Variational Lower Bound.

By optimizing a computable lower bound, we can indirectly optimize the intractable loss function.

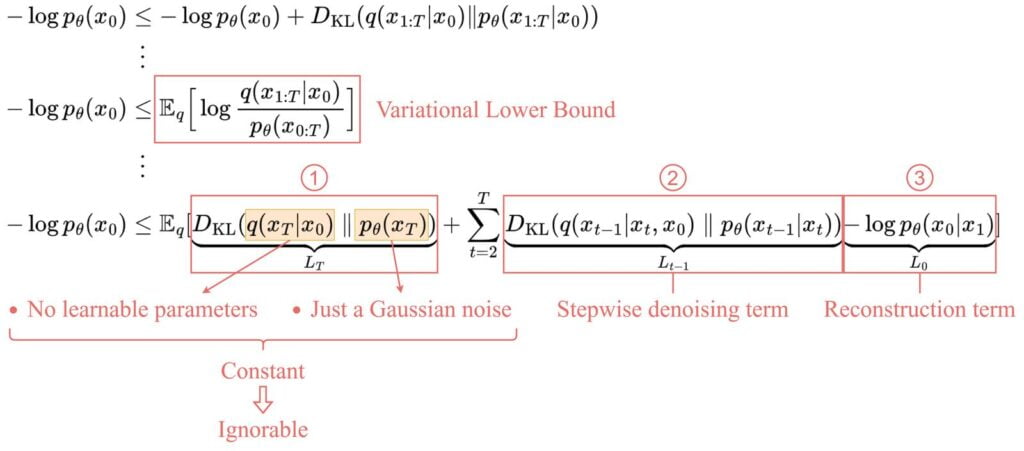

By expanding the variational lower bound, we found that it can be represented with the following three terms:

1. L_T: Constant term

Since q has no learnable parameters and p is just a Gaussian noise probability, this term will be a constant during training and thus can be ignored.

2. L_{t-1}: Stepwise denoising term

This term compares the target denoising step q and the approximated denoising step p_\theta.

Note that by conditioning on x_0, the q(x_{t-1}|x_t, x_0) becomes tractable.

After a series of derivations, the mean \tilde{\boldsymbol{\mu}}_t of q(x_{t-1}|x_t, x_0) is shown above.

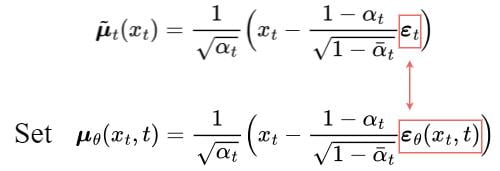

To approximate the target denoising step q, we only need to approximate its mean using a neural network. So we set the approximated mean \boldsymbol{\mu}_\theta to be in the same form as the target mean \tilde{\boldsymbol{\mu}}_t (with a learnable neural network \boldsymbol{\varepsilon}_\theta):

The comparison between the target mean and the approximated mean can be done using a mean squared error (MSE):

Experimentally, better results can be achieved by ignoring the weighting term and simply comparing the target and predicted noises with MSE.

So, it turns out that to approximate the desired denoising step q, we just need to approximate the noise \boldsymbol{\varepsilon}_t using a neural network \boldsymbol{\varepsilon}_\theta.

3. L_0: Reconstruction term

This is the reconstruction loss of the last denoising step and it can be ignored during training for the following reasons:

- It can be approximated using the same neural network in L_{t-1}.

- Ignoring it makes the sample quality better and makes it simpler to implement.

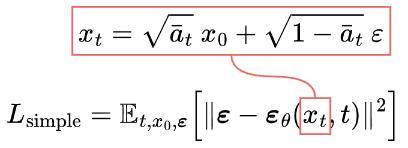

Simplified Loss

So the final simplified training objective is as follows:

We find that training our models on the true variational bound yields better codelengths than training on the simplified objective, as expected, but the latter yields the best sample quality. [2]

The U-Net Model

Dataset

In each epoch:

- A random time step t will be selected for each training sample (image).

- Apply the Gaussian noise (corresponding to t) to each image.

- Convert the time steps to embeddings (vectors).

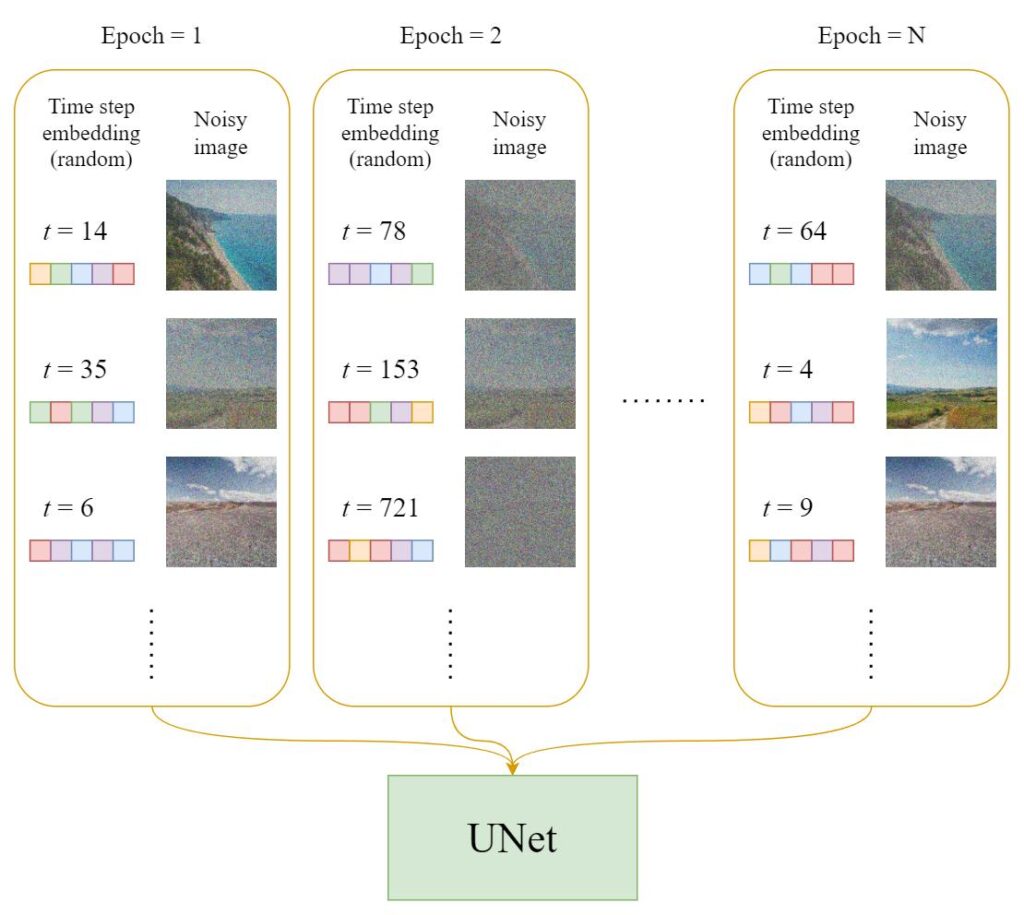

Training

The official training algorithm is as above, and the following diagram is an illustration of how a training step works:

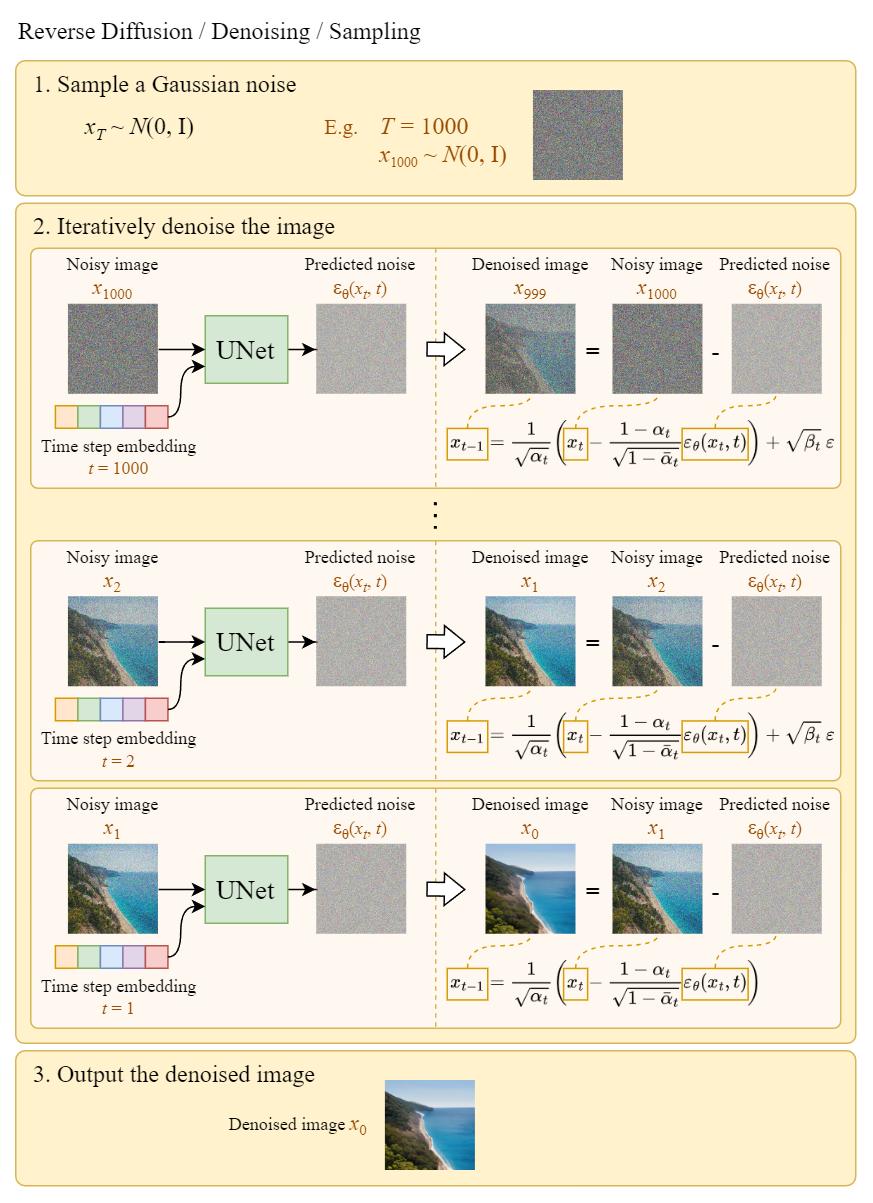

Reverse Diffusion

We can generate images from noises using the above algorithm. The following diagram is an illustration of it:

Note that in the last step, we simply output the learned mean \boldsymbol{\mu}_\theta(x_1, 1) without adding the noise to it.

Summary

Here are some main takeaways from this article:

- The Diffusion model is divided into two parts: forward diffusion and reverse diffusion.

- The forward diffusion can be done using the closed-form formula.

- The backward diffusion can be done using a trained neural network.

- To approximate the desired denoising step q, we just need to approximate the noise \boldsymbol{\varepsilon}_t using a neural network \boldsymbol{\varepsilon}_\theta.

- Training on the simplified loss function yields better sample quality.

References

[1] K. Roose, “An a.i.-generated picture won an art prize. artists aren’t happy.,” The New York Times, 02-Sep-2022. [Online]. Available: https://www.nytimes.com/2022/09/02/technology/ai-artificial-intelligence-artists.html.

[2] J. Ho, A. Jain, and P. Abbeel, “Denoising Diffusion Probabilistic models,” arXiv.org, 16-Dec-2020. [Online]. Available: https://arxiv.org/abs/2006.11239.

[3] N. A. Sergios Karagiannakos, “How diffusion models work: The math from scratch,” AI Summer, 29-Sep-2022. [Online]. Available: https://theaisummer.com/diffusion-models.

[4] L. Weng, “What are diffusion models?,” Lil’Log, 11-Jul-2021. [Online]. Available: https://lilianweng.github.io/posts/2021-07-11-diffusion-models/.

[5] A. Seff, “What are diffusion models?,” YouTube, 20-Apr-2022. [Online]. Available: https://www.youtube.com/watch?v=fbLgFrlTnGU.

[6] Outlier, “Diffusion models | paper explanation | math explained,” YouTube, 06-Jun-2022. [Online]. Available: https://www.youtube.com/watch?v=HoKDTa5jHvg.

![Training algorithm [2]](https://codoraven.com/app/uploads/2023/02/16_algo_training.jpg)

![Sampling algorithm [2]](https://codoraven.com/app/uploads/2023/02/18_algo_sampling.jpg)